01

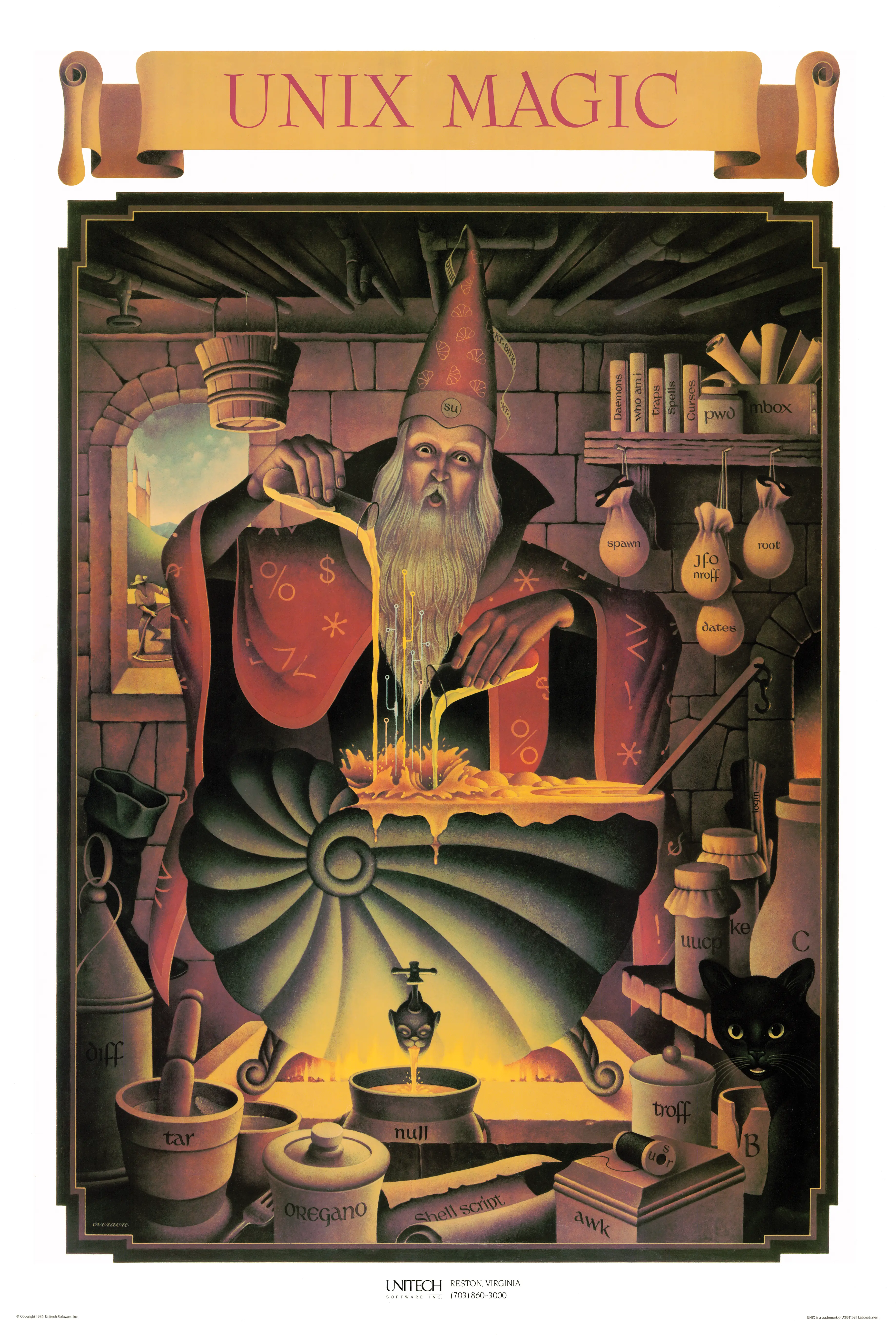

Shell

02

man

03

Pipes

04

Memory leaks

05

dmr · kt · bwk

06

The C Programming Language

07

Backpressure

08

Daemons

09

su

10

null

11

Oregano

12

tar

13

fork

14

Shell script

15

AWK

16

spool

17

troff

18

B

19

cat

20

uucp

21

boot (or sock?)

22

make

23

spawn

24

nroff

25

root

26

dates

27

whoami

28

pwd

29

mbox

30

login

31

spells

32

curses

33

diff

34

traps

35

Shell's symbols

36

Overflows

37

tee

38

Filesystem hierarchy

39

skull

40

banner

41

wall